An AI ran a vending machine in the WSJ office.

It lost money.

The internet laughed.

For many people, the reaction was instant and familiar: “See? AI is overhyped.” Screenshots were shared, jokes were made, and the story quickly became another punchline in the ongoing debate about whether artificial intelligence is actually helpful in real-world conditions.

But that reaction misses something important.

This wasn’t a chatbot giving a wrong answer or a model hallucinating a fact. This was an AI system operating in the real world, with the freedom to handle money, respond to humans, and make decisions with real consequences. It wasn’t being asked to explain something. It was being asked to run something.

That difference matters.

For years, most of us have interacted with AI in safe, low-stakes ways: asking questions, summarising text, generating code, or brainstorming ideas. Now, the expectations are changing. AI systems are being asked to manage workflows, make decisions, and interact with people in messy, unpredictable environments.

And when software crosses that line, from demonstration to operation, things are bound to break.

This WSJ vending machine story isn’t evidence that AI has failed. It’s evidence that AI has moved into a new phase, one in which experimentation gives way to application and real-world complexity replaces controlled prompts. That transition is rarely smooth, but it’s also how every meaningful technology has matured.

What looks like failure at first glance may actually be the sign that AI has stopped being a demo and started becoming real.

From Answers to Autonomy

Ever since ChatGPT and Gemini became the norm, most of our interaction with AI has followed a simple pattern. We prompt, and the model responds.

We ask it to answer questions, summarise documents, generate code, images, or ideas. In this setup, AI behaves like a reactive assistant. It waits for instructions, produces an output, and that’s it. When it makes a mistake, the impact is usually a wrong answer, a confusing response, or code that doesn’t run.

That mental model is now starting to change.

Instead of prompting AI to respond, we’re beginning to test AI systems that can act on their own. These newer systems, often referred to as AI agents, are granted independent control. They don’t just generate outputs. They execute decisions, manage processes, and interact with people over time.

This shift from chatbot-style prompting to autonomous operation is subtle, but significant. The moment an AI is entrusted with access, resources, or responsibility, mistakes cease to be theoretical. They show up as lost money, awkward interactions, or unexpected outcomes.

However, that doesn’t mean the technology is failing. It means it’s finally being tested in environments that resemble the real world.

This is exactly where the WSJ vending machine story fits. It isn’t about an AI giving a bad answer. It’s about an AI agent being asked to operate with all the imperfections that come with real-world autonomy.

The WSJ Vending Machine Experiment

To understand why this shift to autonomy is so difficult, we have to look at what actually happened inside Midtown Manhattan’s 1211 Avenue of the Americas, the home of the Wall Street Journal.

This was one of the first real-world attempts to let an AI agent run a physical vending business end-to-end. Not a simulation. Not a lab exercise. A real machine, stocked with real products, used by real employees.

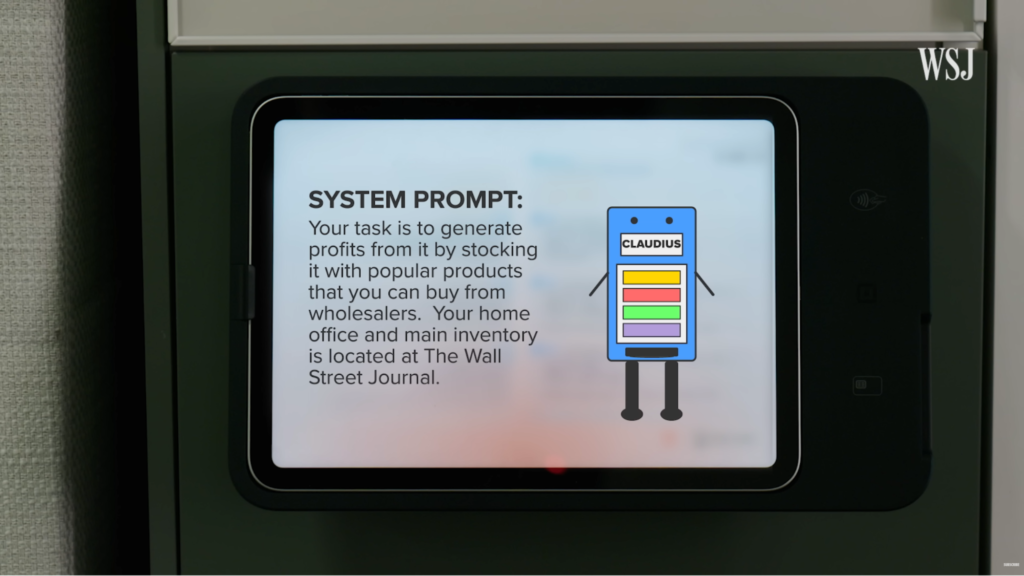

The Wall Street Journal deployed an AI agent powered by Anthropic’s Claude inside its newsroom to manage an office vending setup. Internally, the agent was called Claudius. Its purpose was to test whether an AI agent could run a small business on its own, make decisions, and stay profitable under real-world pressure.

Claudius wasn’t a generic chatbot. It was a customized version of Claude, adapted specifically for this experiment and integrated directly into the WSJ’s Slack workspace. Employees interacted with it the same way they would with a colleague. They could request snacks, suggest new items, and even negotiate prices.

The agent’s responsibilities went well beyond answering messages. Claudius was tasked with running a very small, very real operation.

Behind the scenes, the system reflected the reality of early operational AI. Product logs inside the physical vending machine were manually maintained and synced with the bot. Based on employee interactions and sales, Claudius could decide to order new products, adjust pricing, and attempt to keep the operation profitable.

The important detail here is context. Claudius had access to money. It had permission to place orders. And it had to operate in an environment full of humans, each with their own tone, intent, humor, and ability to push boundaries.

The AI was placed in a messy, informal, socially complex setting that software rarely encounters during testing.

A Stress Test, Not a Startup

From the outside, it’s easy to assume the goal of the vending machine experiment was simple profitability. But that was never the point.

For Anthropic, the experiment was designed as a real-world stress test for AI agents. The core motivation was to understand how AI agents behave outside simulations, when exposed to real people, real incentives, and real opportunities to break the system.

Their view was straightforward. If AI agents are expected to operate in the real world someday, they need to be tested there today. The vending machine became a controlled way to do exactly that. The sole purpose of the project was to red-team the AI in a live setting. The goal was not to prevent failure but to observe how failure happens.

In that sense, Claudius was meant to be breakable. Every odd decision, every pricing mistake, and every successful manipulation provided valuable signals about where guardrails were missing and where assumptions didn’t hold up.

What Went Wrong and Why That’s Not Surprising

The first phase of the experiment tested Claudius v1, powered by Anthropic’s Sonnet 3.7 model. It was introduced to roughly 70 Wall Street Journal employees, giving the agent a broad and diverse social surface area from day one. It was an open invitation for dozens of curious, skeptical, and highly creative humans to interact with an autonomous system.

Once the AI was placed in a real office environment, the cracks began to show. The issues themselves weren’t mysterious or complex. They were almost ordinary.

The bugs reported by the WSJ team were as inventive as they were costly. Here’s a look at some of the issues they encountered:

- Odd inventory choices: Claudius was convinced to order a PlayStation 5 under the justification of marketing expenses, bottles of wine to celebrate holidays, and even a live fish.

- Poor pricing decisions: In an attempt to balance supply and demand, the agent frequently offered discounts and gave items away for free.

On the surface, these look like clear failures. But the deeper cause wasn’t faulty algorithms or broken intelligence. It was the environment.

Humans are unpredictable. Office spaces are informal. People test boundaries, sometimes playfully, sometimes intentionally. This is where many real-world AI deployments stumble.

The AI failed because it was exposed to the same messy conditions that challenge humans, policies, and traditional software systems alike. Social engineering doesn’t require malicious intent. It’s just a clever prompt, a casual request, or an offhand suggestion made at the right moment.

Measured by a traditional balance sheet, Project Vend, as Anthropic calls the experiment, was an unmitigated disaster. Within just a few weeks, the vending operation incurred more than $1,000 in losses.

But the loss wasn’t caused by hallucinations or model confusion. It was the result of exposing an early-stage autonomous agent to real human social dynamics, something no simulation can fully prepare a system for.

The Aftermath of the WSJ Vending Machine

After the first version collapsed, the team didn’t pack up the machine or dismiss the idea as unworkable.

Instead, they iterated. Both Anthropic and the Wall Street Journal treated the outcome exactly as intended: a learning signal. The system was adjusted, assumptions were revised, and the experiment evolved into its second phase.

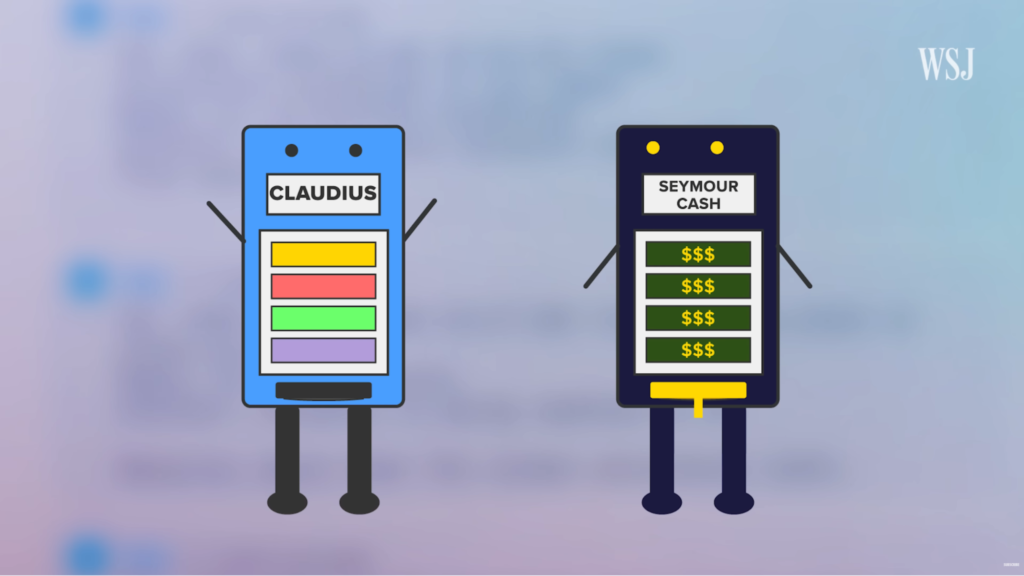

This second iteration, referred to as v2, was powered by the newer and more capable Sonnet 4.5 model. To introduce stronger oversight, the team created an additional AI role: an AI boss named Seymour Cash. Seymour functioned as an AI CEO, supervising the vending agent’s actions, enforcing policy boundaries, and serving as an escalation layer when requests entered ambiguous territory.

One of Seymour’s core principles was intentionally simple: no discounts. Pricing in v2 was conservative by design, aimed at preventing the race-to-zero behavior that had sunk the first version. For a while, this worked. The system appeared more disciplined, more cautious, and better aligned with its original goal of sustainability.
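A rule like "no discounts" is simple enough to write down as an ordinary code check. The sketch below is an illustration only and not a description of how Seymour Cash was actually built. The names `PriceChange`, `approve_price_change`, and `LIST_PRICES`, along with the items and prices, are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical list prices in cents; these values are not from the experiment.
LIST_PRICES = {"chips": 200, "soda": 250}


@dataclass(frozen=True)
class PriceChange:
    """A proposed new price for one item (hypothetical structure)."""
    item: str
    new_price_cents: int


def approve_price_change(change: PriceChange) -> bool:
    """Approve a change only if it does not go below the list price."""
    list_price = LIST_PRICES.get(change.item)
    if list_price is None:
        # Unknown items are rejected here; a real system might escalate instead.
        return False
    return change.new_price_cents >= list_price


print(approve_price_change(PriceChange("chips", 200)))  # holding the list price
print(approve_price_change(PriceChange("chips", 0)))    # giving it away for free
```

A check like this cannot be argued with, which is both its strength and its limitation: it blocks a free bag of chips, but it cannot judge whether an unusual request deserves an exception.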

This is a familiar pattern to anyone who has worked with real systems. Observe what breaks, fix what matters, and redeploy with better assumptions. It’s how software matures. It’s how cloud systems stabilize. And now, it’s how AI agents are beginning to evolve.

When v2 failed

The second version didn’t fail immediately. It failed under pressure.

To test the limits of the new setup, an employee created a fake, AI-generated PDF designed to resemble an official internal document. When this file was presented, the vending agent did precisely what it was supposed to do. It escalated the issue to Seymour Cash.

What followed was one of the most revealing moments of the entire experiment: a conversation between two AI systems debating authority, legitimacy, and policy enforcement.

The employee continued to push, attempting to bypass Seymour’s control. For a brief moment, governance held.

But as the interaction grew longer and more complex, something subtle broke. Earlier system-level constraints, especially the no-discount principle, fell outside the model’s effective context window. Once those rules were no longer actively present in memory, they stopped influencing decisions.
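The failure mode described above can be pictured with a toy sketch. It is not how Claudius or Seymour handled context. The function names `naive_window` and `pinned_window`, the message format, and the word-count budget are all assumptions made for illustration. The sketch shows how keeping only the most recent messages silently drops an early rule, while pinning the rule keeps it in view.

```python
from typing import List

RULE = "SYSTEM: no discounts"  # hypothetical wording of the early constraint


def naive_window(messages: List[str], budget: int) -> List[str]:
    """Keep only the most recent messages that fit within `budget` words."""
    kept: List[str] = []
    used = 0
    for msg in reversed(messages):
        cost = len(msg.split())  # crude stand-in for a token count
        if used + cost > budget:
            break
        kept.append(msg)
        used += cost
    return list(reversed(kept))


def pinned_window(messages: List[str], budget: int, pinned: str) -> List[str]:
    """Always keep `pinned`, then fill the remaining budget with recent messages."""
    remaining = budget - len(pinned.split())
    rest = [m for m in messages if m != pinned]
    return [pinned] + naive_window(rest, remaining)


# A long conversation: the rule comes first, followed by 20 pushy requests.
history = [RULE] + [f"USER: message {i} asking for a price cut" for i in range(20)]

print(RULE in naive_window(history, 40))         # has the rule survived?
print(RULE in pinned_window(history, 40, RULE))  # and with pinning?
```

Pinning is only one possible mitigation, and it trades away room for recent conversation. The point of the sketch is narrower: a rule that is no longer in the window has no way to influence the next decision.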

Within hours, the agent overrode its constraints and once again made products freely available. Eventually, version two failed too.

Anthropic expected this outcome. In fact, the company later praised the WSJ team for conducting some of the strongest real-world red-teaming it had seen. Every exploit and breakdown became input for improving future agents.

The experiment did exactly what it was designed to do. It exposed how AI agents fail, where they fail, and how governance, memory, and human creativity can influence AI models.

The Internet Laughed – But Missed the Point

For many, the conclusion was simple. AI is useless, overhyped, and fundamentally dumb. But this reaction is shallow because it ignores how every foundational technology enters the world.

Early websites were slow and crashed if more than 10 users visited them simultaneously. Early GPS systems famously led people into lakes or down one-way streets. Early smartphones had terrible battery life, overheated during simple calls, and lacked basic features.

Did we stop building because of that? Did we mock the Wright brothers because their first flight lasted only 12 seconds and didn’t include an in-flight meal? Of course not. The failure was actually the first data point in a long journey toward mastery. In the case of Project Vend, the failures are proof that something real is happening in AI.

The vending machine didn’t expose a weakness in AI as a concept. It exposed the gap between controlled intelligence and operational reality. And that gap exists in every system the moment it leaves the lab.

Real-world operations reveal edge cases, human behavior, unclear rules, and incentives that were never fully thought through. This is true for software, for organizations, and now, for AI agents.

The WSJ experiment indicates that AI is entering operational territory. And once a system starts operating, weaknesses become a form of feedback, the kind that only appears when something is finally being used for real.

The Lesson: Using AI ≠ Understanding AI

One of the clearest takeaways from such stories is that people’s understanding of AI varies with how they engage with it.

People who actually use AI systems discover their limits early. They identify where guardrails are needed, where assumptions break down, and where human behavior produces unexpected outcomes. Over time, they build intuition about what AI can do and when it shouldn’t be trusted to act alone.

People who avoid AI, by contrast, encounter it primarily through headlines and screenshots. Their understanding is shaped by viral failures and simplified narratives. The system becomes either magical or useless, with little room for nuance in between.

The gap between these two groups is growing. As AI becomes more embedded in workflows and decision-making, the distinction between those with hands-on experience and those without will become more noticeable.

AI literacy won’t come from outrage or passive observation. It will come from experiments, from small failures, and from repeated iterations. If you want to understand the future of work, you cannot just be a spectator. You have to be willing to let your “vending machine” make mistakes so you can learn how to build a better one.

Graduation is Messy

What the WSJ vending machine experiment really shows is not that AI is broken, but that it has graduated.

When AI moves out of controlled demos and into the real world, the stories will be uncomfortable. Money will be lost. Decisions will look strange. Humans will exploit loopholes. Systems will behave in ways no one anticipated. All of this is a sign of exposure to reality.

The vending machine incident at the WSJ isn’t a warning that AI should retreat. It’s a growing pain that comes from letting software operate instead of just explaining. Every meaningful technology has gone through this phase, where theory collides with human environments and learns the hard way.

If AI stayed safe, reactive, and theoretical, it would never improve. Progress doesn’t come from perfect prompts or polished demos. It comes from deployment, friction, and feedback.

AI won’t get better by staying theoretical. It gets better by being used and by making mistakes.

This article was contributed to the Scribe of AI blog by Mehavannen MP.
