Ever put milk in your fridge and then completely forgotten about it? I have. Or failed to notice gas leaking all over the place? I haven’t, and I hope I never do. It’s not our fault. Caught up in our day-to-day struggles, we’re simply too busy to notice. But what if there were AI systems that could ‘notice’ these things and tell us about them? They could alert us just in time to throw out the spoiled milk before it stinks up the place, or to deal with the gas leak before … well, you know. That’s where Sensory AI comes in.

Sensory AI is considered to be one of the next leaps in artificial intelligence. These systems are trained to see, hear, touch, smell, and even taste (yes, you read that right) the world around them, including smelling spoiled milk or leaking gas. Seeing the potential of such technology, many companies have already started to adopt sensory AI. In fact, its market size has grown to a whopping $34.19 billion in 2025.

In this blog post, we’ll explore the different types of sensory AI in detail, along with the techniques and hardware behind them. We’ll also discuss how methods like multimodal fusion can join them together to create AI systems capable of processing many inputs at once. Let’s get started!

What is Sensory AI?

Sensory AI systems are advanced AI systems that can interpret and respond to sensory inputs like the ones humans perceive: sight, sound, touch, smell, and taste. They combine machine learning algorithms with state-of-the-art hardware sensors that let them process different kinds of sensory data. This helps them interact with us humans more naturally and intuitively.

A great example of a sensory AI system is the Automatic Number Plate Recognition (ANPR) capability in security cameras. Camera systems with computer vision can detect and recognize vehicle number plates, even in busy traffic. We’ll learn more about this in the next section.

Sensory AI works by using several technological components together. Let’s take a look at them:

- Sensors and Cameras: These devices capture sensory data from the environment, such as visual images, sounds, and tactile information.

- Machine Learning Algorithms: Algorithms process the sensory data to recognize patterns and make sense of the inputs.

- Multimodal Data Integration: Sensory AI systems combine data from multiple sensors to create a detailed understanding of the environment. This allows the AI to respond more accurately to complex stimuli.

- Actuators and Output Devices: These let the AI system act on the physical environment and give feedback to users through speech, visual displays, or other physical actions. They’re especially useful in robotics.
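To make that division of labor concrete, here is a minimal Python sketch of the sensor → algorithm → actuator loop for the gas-leak scenario from the intro. Everything in it is hypothetical: the readings, the ppm units, the 300 ppm threshold, and the function names are made up for illustration, and the “algorithm” is a simple moving average plus a threshold rather than a trained model.

```python
# Hypothetical readings from a gas sensor, in parts per million (ppm).
READINGS_PPM = [40, 42, 41, 45, 120, 310, 480, 520]
ALERT_THRESHOLD_PPM = 300  # assumed threshold, for illustration only


def smooth(readings, window=3):
    """Algorithm stage: a moving average damps one-off noisy readings."""
    smoothed = []
    for i in range(len(readings)):
        recent = readings[max(0, i - window + 1): i + 1]
        smoothed.append(sum(recent) / len(recent))
    return smoothed


def is_leak(level_ppm, threshold=ALERT_THRESHOLD_PPM):
    """Interpretation: does the smoothed level look like a leak?"""
    return level_ppm >= threshold


def actuate(leak):
    """Actuator stage: a real device might sound a buzzer or close a valve."""
    return "ALERT: possible gas leak" if leak else "ok"


def run_pipeline(readings):
    """Sensor data in, one status per reading out."""
    return [actuate(is_leak(level)) for level in smooth(readings)]


if __name__ == "__main__":
    for raw, status in zip(READINGS_PPM, run_pipeline(READINGS_PPM)):
        print(f"{raw:>4} ppm -> {status}")
```

In a real product, the smoothing-and-threshold step is where a trained model would sit, and the actuator would drive a buzzer, an app notification, or a shut-off valve instead of returning a string. Note the trade-off the smoothing introduces: the single 310 ppm spike does not trigger an alert on its own, but the alert also arrives one reading later.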

Next, let’s go through each of the senses one by one and discuss how AI collects and analyzes the sensory data. We’ll start with sight. Why? Because...

“Sight is the most perfect and most delightful of all our senses.” – Joseph Addison, British writer and politician.

Seeing: Computer Vision and More

Computer vision is a branch of AI that handles the analysis of visual data. In simple words, ‘it helps computers see’. It uses machine learning and neural network models to extract meaningful information from images or video frames. This includes tasks like object detection and face recognition. It can even read text from images or scanned documents using a technique known as Optical Character Recognition (OCR). The ANPR system we discussed earlier uses computer vision (OCR, to be more specific) to read the letters and numbers on license plates. ChatGPT can do this as well: upload an image containing text and check it out.

Computer vision works through machine learning models that go through two main phases: training and inference.

During training, a model is shown a large dataset of labeled images of different objects. For example, to teach it to recognize screwdrivers, the model is fed thousands of images of screwdrivers in different shapes, colors, and angles, as well as images of things that aren’t screwdrivers. Through repeated analysis, the model learns to identify patterns and features that distinguish a screwdriver from other objects.

Once trained, the model enters the inference stage, where it can be used to analyze new images and make predictions, like detecting and identifying a screwdriver in a photo it hasn’t seen before. Someday, this might help robots run away from you when you pick up a screwdriver.
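The two phases can be sketched in a few lines of Python. This is a deliberately tiny stand-in, not how production vision models work: instead of a neural network learning from raw pixels, each “image” is reduced to two made-up feature values, and training simply builds a nearest-centroid classifier (the average feature vector for each label). The feature names and numbers are hypothetical.

```python
import math

# Toy "images": each is summarized by two made-up features,
# (elongation, fraction of metallic pixels). Real models learn
# their own features from raw pixels; these numbers are hypothetical.
TRAINING_DATA = [
    ((0.90, 0.60), "screwdriver"),
    ((0.85, 0.55), "screwdriver"),
    ((0.95, 0.65), "screwdriver"),
    ((0.30, 0.10), "not_screwdriver"),
    ((0.20, 0.05), "not_screwdriver"),
    ((0.40, 0.20), "not_screwdriver"),
]


def train(examples):
    """Training phase: compute the average feature vector (centroid) per label."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(model, features):
    """Inference phase: pick the label whose centroid is closest to the new example."""
    return min(model, key=lambda label: math.dist(model[label], features))


if __name__ == "__main__":
    model = train(TRAINING_DATA)
    print(predict(model, (0.88, 0.58)))  # an unseen "photo"
```

Swapping the centroid step for a convolutional neural network changes how the model learns, but the split stays the same: `train` runs once on labeled data, and `predict` runs on every new image.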

Hearing: AI and Audio Perception

Like sight, hearing is an essential human sense. The ability to hear and understand sounds changed the course of our history, from the creation of languages to music. However, computers process audio a bit differently than we humans do. We have ears; computers have microphones.

Audio is first captured through a microphone and converted into digital form by sampling it, typically 16,000 to 48,000 times per second (16–48 kHz). It’s then transformed into visual representations like spectrograms or Mel spectrograms (heatmaps of frequency vs. time), which show how sound frequencies change over time; Mel spectrograms rescale the frequency axis to roughly match how humans perceive pitch. These representations help neural networks detect patterns in the audio.
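Here is a minimal, dependency-free Python sketch of that transformation: it slices a signal into short overlapping windows, tapers each with a Hann window, and takes a discrete Fourier transform of each one to build a magnitude spectrogram. The 16 kHz sample rate matches the low end of the range above; the frame and hop sizes, and the synthetic 1 kHz tone standing in for microphone input, are assumptions. It also includes one common formula for converting hertz to the mel scale; a full Mel spectrogram would additionally pool the frequency bins through a bank of mel-spaced filters, which this sketch leaves out.

```python
import cmath
import math

SAMPLE_RATE = 16_000  # samples per second (16 kHz)
FRAME_SIZE = 256      # samples per analysis window (assumed)
HOP = 128             # samples between window starts (assumed, 50% overlap)


def hann(n):
    """Hann window: tapers frame edges to reduce spectral leakage."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def dft_magnitudes(frame):
    """Magnitude of each frequency bin from 0 Hz up to the Nyquist frequency."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
        for k in range(n // 2 + 1)
    ]


def spectrogram(signal, frame_size=FRAME_SIZE, hop=HOP):
    """Short-time Fourier transform: one list of bin magnitudes per time window."""
    window = hann(frame_size)
    starts = range(0, len(signal) - frame_size + 1, hop)
    return [
        dft_magnitudes([s * w for s, w in zip(signal[start:start + frame_size], window)])
        for start in starts
    ]


def hz_to_mel(f_hz):
    """One common mel-scale formula: near-linear at low frequencies, logarithmic above."""
    return 2595 * math.log10(1 + f_hz / 700)


if __name__ == "__main__":
    # 0.1 s of a synthetic 1 kHz tone standing in for microphone input.
    tone = [math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE) for t in range(SAMPLE_RATE // 10)]
    spec = spectrogram(tone)
    first = spec[0]
    peak_bin = max(range(len(first)), key=lambda k: first[k])
    peak_hz = peak_bin * SAMPLE_RATE / FRAME_SIZE
    print(f"{len(spec)} frames x {len(first)} bins; peak near {peak_hz:.0f} Hz "
          f"(about {hz_to_mel(peak_hz):.0f} mel)")
```

Real pipelines use a fast Fourier transform from an audio or numerics library rather than this direct O(n²) DFT, but the output has the same shape: a grid of frequency-by-time values that a neural network can treat much like an image.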

Convolutional layers of the neural network pick out sound features, while recurrent or transformer layers model how these features change over time. Models like wav2vec 2.0 are pre-trained on large amounts of unlabeled audio by learning to identify what belongs in masked-out stretches of the input. This kind of pre-training helps build a strong understanding of sound, and the model can then be fine-tuned for tasks like speech recognition. An AI assistant with such features can listen to boring, hours-long meetings and transcribe them while you chill on Netflix.

Smelling: Olfactory AI

Moving on to smell. Despite its importance, smell is one of the least understood senses, especially in the digital world. Computers have been able to see and hear, at least partially, for a very long time, yet they are only now learning how to take a sniff. The AI technique behind this achievement is known as Olfactory Intelligence.

Unlike vision (which uses three color channels – red, green, and blue) or sound (which is based on frequency), smell involves hundreds of different receptors. This makes it far harder to build a feature map, which AI models need for training. Traditional methods couldn’t digitize scent until neural networks stepped in.

Using graph neural networks, researchers built a map linking molecules to the smells they produce. This allowed computers to record, analyze, and recreate scents, much like images and audio. The process resembles how we handle images: a device called GC-MS (gas chromatography-mass spectrometry) captures a scent by separating it into its component molecules and identifying them, much as a camera breaks an image into pixels. That data is then turned into a scent code that can be stored, edited, or reproduced digitally.
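To give a feel for what “using graph neural networks” means here, below is a minimal Python sketch of message passing on a molecule. It is not the researchers’ model. A molecule is treated as a graph: atoms are nodes (here, ethanol’s two carbons and one oxygen, with hydrogens omitted) and bonds are edges. Each layer updates every atom using its own features plus the summed features of its bonded neighbors, and a readout sums the atoms into one fixed-length vector for the whole molecule. The weight values are hypothetical placeholders; a trained model would learn them, and add an output layer predicting odor descriptors, from molecules whose smells have already been labeled.

```python
# Ethanol's heavy atoms as a graph (hydrogens omitted for brevity).
# Node features: one-hot element type [is_carbon, is_oxygen].
ETHANOL = {
    "atoms": [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]],  # C, C, O
    "bonds": [(0, 1), (1, 2)],                      # C-C and C-O bonds
}

# Hypothetical weights; a trained model would learn these from labeled molecules.
W_SELF = [[1.0, 0.0], [0.0, 1.0]]
W_NEIGHBOR = [[0.5, 0.2], [0.1, 0.8]]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def relu(vector):
    return [max(0.0, x) for x in vector]


def message_pass(atoms, bonds):
    """One GNN layer: each atom mixes its own features with the sum of its neighbors'."""
    neighbors = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for i, features in enumerate(atoms):
        summed = [0.0] * len(features)
        for j in neighbors[i]:
            summed = [s + x for s, x in zip(summed, atoms[j])]
        combined = [s + n for s, n in zip(matvec(W_SELF, features), matvec(W_NEIGHBOR, summed))]
        updated.append(relu(combined))
    return updated


def molecule_embedding(molecule, layers=2):
    """Stack message-passing layers, then sum atom vectors into one molecule vector."""
    atoms = molecule["atoms"]
    for _ in range(layers):
        atoms = message_pass(atoms, molecule["bonds"])
    return [sum(column) for column in zip(*atoms)]


if __name__ == "__main__":
    print(molecule_embedding(ETHANOL))
```

The key design point is that the output vector has the same length no matter how many atoms a molecule has, and it does not depend on the order in which the atoms are listed, which is what lets a single model compare very different molecules.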

That digitized scent data can be used to train AI models, much like how labeled images or audio are used in computer vision or speech recognition.

Tasting: AI and Flavor Analysis

We humans taste with our tongues, using the taste buds on them. Taste buds contain special receptor cells that detect five basic tastes: sweet, sour, salty, bitter, and umami. When a receptor detects a taste, it sends the signal to the brain, where the taste is interpreted.

Recreating this process for a computer is a little tricky, but possible. Special hardware called electronic tongues (e-tongues) is used to collect taste data. An e-tongue uses an array of sensors coated with different chemical films, each designed to respond to specific taste molecules in a sample, much like human taste buds do.

When these sensors interact with the compounds in food or drink, they generate electrical signals that reflect the sample’s chemical makeup. AI algorithms then analyze these signals, comparing the patterns to known taste profiles to identify, classify, or evaluate the taste of new samples. With future advances, these AI systems could rival Chef Gordon Ramsay. Hopefully, no one gets called ‘an idiot sandwich’.
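The pattern-matching step can be sketched in Python as follows. This is a hypothetical illustration, not the algorithm of any particular e-tongue: it assumes a 5-sensor array, invents a reference “fingerprint” for each basic taste, and labels a new sample by the reference profile with the highest cosine similarity.

```python
import math

# Hypothetical reference "fingerprints": the response of a 5-sensor e-tongue array
# (arbitrary units) to a pure sample of each basic taste. Values are invented.
REFERENCE_PROFILES = {
    "sweet": [0.9, 0.1, 0.1, 0.0, 0.2],
    "sour": [0.1, 0.9, 0.2, 0.1, 0.0],
    "salty": [0.1, 0.2, 0.9, 0.1, 0.1],
    "bitter": [0.0, 0.1, 0.1, 0.9, 0.2],
    "umami": [0.2, 0.0, 0.3, 0.1, 0.9],
}


def cosine_similarity(a, b):
    """1.0 means the two response patterns point in exactly the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def classify_taste(signal, profiles=REFERENCE_PROFILES):
    """Compare a new sample's signal pattern against every known profile."""
    scores = {label: cosine_similarity(signal, ref) for label, ref in profiles.items()}
    best = max(scores, key=scores.get)
    return best, scores


if __name__ == "__main__":
    sample = [0.15, 0.85, 0.25, 0.05, 0.05]  # made-up reading from an unknown drink
    label, scores = classify_taste(sample)
    print(label, {k: round(v, 2) for k, v in scores.items()})
```

Cosine similarity compares the shape of the response pattern rather than its overall strength, so a weaker signal with the same pattern still matches the same profile. Real foods mix several tastes, so practical systems often report the whole score vector or use a trained classifier instead of a single best match.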

A wine-tasting AI developed by researchers at the National Institute of Standards and Technology (NIST) is a great example of such technology. It identified different types of wine from their chemical characteristics with over 95% accuracy.

Touching: Tactile Perception in Robotics

“One touch is worth ten thousand words.” – Harold H. Bloomfield, American psychiatrist and author.

The sense of touch gives us information we can’t get through any other sense, such as an object’s temperature, texture, and weight, and sometimes even its state. Without it, even a simple handshake would be meaningless. We have the largest organ in the human body, the skin, to thank for the sense of touch.

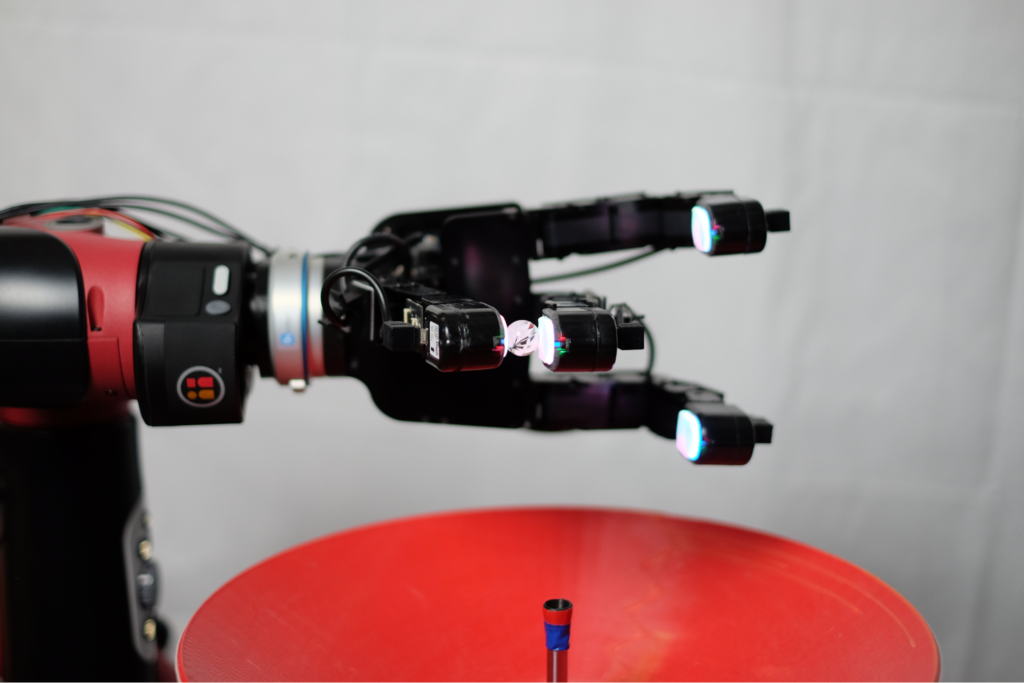

Machines, however, use a different method, known as tactile sensing, to mimic human touch. It’s especially useful in robotics, where it helps robots interact with the real world far more effectively.

To teach AI robots how to sense and learn from touch, we need sensors capable of mimicking it. These sensors must be small, durable, and able to withstand repeated use, while still capturing detailed information like texture, pressure, and shape. A great example of such a sensor is the DIGIT sensor developed by Meta. Using data from such sensors, AI algorithms can work out what kind of object the robot is dealing with and how to handle it reliably.
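As a rough illustration of turning touch data into decisions, here is a Python sketch that summarizes a single reading from a fingertip sensor. It assumes a hypothetical 4×4 grid of pressure values; DIGIT itself is camera-based and produces images of a deforming gel surface, which a real pipeline would process with a vision model. The threshold, target force, and the simple tighten/hold/loosen rule are all made up and stand in for what a learned model would decide.

```python
# Hypothetical fingertip sensor: a small grid of pressure readings (arbitrary units).
CONTACT_THRESHOLD = 0.2  # readings below this count as "no contact" (assumed)


def contact_summary(grid, threshold=CONTACT_THRESHOLD):
    """Total force, contact area (pressed cells), and center of pressure (row, col)."""
    force, area = 0.0, 0
    row_moment = col_moment = 0.0
    for r, row in enumerate(grid):
        for c, pressure in enumerate(row):
            if pressure >= threshold:
                force += pressure
                area += 1
                row_moment += r * pressure
                col_moment += c * pressure
    center = (row_moment / force, col_moment / force) if force > 0 else None
    return {"force": force, "area": area, "center": center}


def grip_adjustment(summary, target_force=2.0, tolerance=0.5):
    """Crude rule: tighten if barely touching, loosen if squeezing too hard."""
    if summary["area"] == 0 or summary["force"] < target_force - tolerance:
        return "tighten"
    if summary["force"] > target_force + tolerance:
        return "loosen"
    return "hold"


if __name__ == "__main__":
    reading = [
        [0.0, 0.0, 0.0, 0.0],
        [0.0, 0.5, 0.5, 0.0],
        [0.0, 0.5, 0.5, 0.0],
        [0.0, 0.0, 0.0, 0.1],  # 0.1 is below the threshold, treated as noise
    ]
    summary = contact_summary(reading)
    print(summary, grip_adjustment(summary))
```

Features like total force, contact area, and the center of pressure are the kind of compact summary a learning algorithm could use to tell a soft object from a hard one, or to notice an object shifting in the grip.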

Sensor Fusion and Multimodal Learning

Now that we have explored all the senses and how AI and robotics can replicate them, let’s take a closer look at how all of them can be combined.

Combining data from different hardware sensors is known as sensor fusion, and it gives rise to multimodal AI (AI that can process multiple types of data). It allows machines to experience the real world much like we humans do: all the senses together paint a richer and more accurate picture of the environment. This is key because the real world is noisy and complex, and fusing multiple senses (data sources) is more reliable than relying on just one.
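One classic, easy-to-verify fusion rule is inverse-variance weighting: when independent sensors measure the same quantity, each reading is weighted by how reliable it is (1 / variance). Below is a minimal Python sketch; the camera and ultrasonic distance readings and their variances are hypothetical, and the rule assumes each sensor’s errors are independent and unbiased. Multimodal AI models usually fuse learned features rather than single numbers, but the principle of trusting the less noisy source more is the same.

```python
def fuse(estimates):
    """Inverse-variance weighted fusion of (value, variance) pairs.

    Assumes each sensor's error is independent and unbiased. Less noisy sensors
    (smaller variance) get more weight, and the fused variance is smaller than
    any single sensor's variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused_value, 1.0 / total_weight


if __name__ == "__main__":
    # Hypothetical distance-to-obstacle readings in meters: (estimate, variance).
    camera = (4.0, 0.25)        # vision-based depth estimate, noisier
    ultrasonic = (3.5, 0.0625)  # ultrasonic range finder, less noisy
    distance, variance = fuse([camera, ultrasonic])
    print(f"fused distance: {distance:.2f} m, variance: {variance:.3f}")
```

With these made-up numbers, the fused distance is 3.6 m with a variance of 0.05, lower than either sensor’s alone. The estimate lands closer to the ultrasonic reading because that sensor was assumed to have a quarter of the camera’s variance.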

Many professional sectors are looking into adopting multimodal AI for different use cases. In fact, the global multimodal AI market size is projected to reach around $10.89 billion by 2030.

Healthcare is a great example. Medical professionals in hospitals and clinics can use such systems for tasks like analyzing X-rays (sight), detecting tiny variations in heartbeats (hearing), or detecting toxic substances, like alcohol, in the body (smell). For instance, smell-based AI systems have been shown to detect signs of COVID-19.

Conclusion: Making AI Feel More Human

By replicating sight, sound, touch, smell, and taste, AI is no longer limited to abstract data; it can now interact with the real, physical world. From seeing and recognizing our faces to smelling spoiled milk or even tasting wine, sensory AI is bringing machines a step closer to experiencing the world as we do.

With advances in sensor technology, machine learning, and multimodal fusion, these systems are becoming smarter, more intuitive, and more useful across industries like healthcare, robotics, and food safety. As sensory AI continues to evolve, we’re not just teaching machines to understand us; we’re building technology that can sense the world alongside us and expand our own capabilities as humans.

This article was contributed to the Scribe of AI blog by Nabin Naseer.

At Scribe of AI, we spend day in and day out creating content to push traffic to your AI company’s website and educate your audience on all things AI. This is a space for our writers to have a little creative freedom and show off their personalities. If you would like to see what we do during our 9 to 5, please check out our services.